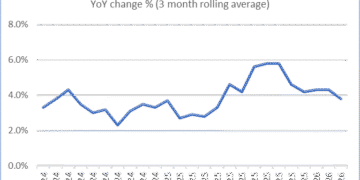

Fort Lauderdale, FL, March 16, 2026 –(PR.com)– rolv, LLC releases breakthrough benchmarks for rolvsparse©, a patent-pending software compute primitive that delivers 20–177× AI inference speedups and up to 99.5% energy reduction on unmodified models, compared to vendor-optimized dense and sparse libraries. All energy numbers use real hardware power readings (live polling every 50 ms). No hardware swaps, no retraining, no precision loss. Results are independently validated by the University of Miami Frost Institute for Data Science and Computing, with bit-identical SHA-256 hashes across all platforms.

rolv, LLC’s rolvsparse© achieves 20–177× speedups with 98–99.5% energy savings on real Hugging Face production models, using live hardware power readings. Even a fully dense matrix (0% sparsity) gets a 63× speedup. A used server CPU now matches a $40,000 high-end GPU. Software-only. Independently validated. Five patents pending.

“The AI world is investing heavily in new chips to address computational challenges. The core idea for rolvsparse came to me on a bike ride in Fort Lauderdale in May 2025. Everything since (patents, prototypes, self-taught stacks, benchmarks and university validation) has been relentless execution until today. Why build more data centers when your existing ones can achieve 83× faster performance and 99% greener operations?”

Rolv E. Heggenhougen, Founder & CEO, rolv.ai

Independently Validated Benchmark Highlights: Compared to Vendor-Optimized Dense and Sparse Libraries (Real Hardware Power Readings)

Llama 4 Maverick (real MoE weights, throughput): 20.7× speedup, 81.5% energy reduction

Llama 4 Maverick (time-to-first-token): 177× speedup

Llama 4 Scout (real weights): 81.7× speedup, 98.8% energy reduction

DeepSeek-R1 (256 MoE experts, real weights): 78.9× speedup, 98.7% energy reduction

Mixtral 8×22B (all 56 layers, real weights): 55.1× speedup, 98.2% energy reduction

Qwen2.5-72B-Instruct (real weights): 50.5× speedup, 91.4% energy reduction

Qwen3-235B-A22B (real weights): 22.4× speedup, 95.5% energy reduction

Claude 3.5-class proxy (production-scale FFN): 83× speedup, 98.8% energy reduction

Dense matrix (0% sparsity, 20k×20k on high-end GPU): 63× speedup, 98.42% energy reduction

Finite Element Solver (80% sparse, structural sim): 163× speedup, 99.5% energy reduction

Netflix Prize RecSys (real sparse GEMM): 3.1× speedup, 67.7% energy reduction

CPU vs. GPU (used server CPU vs. high-end GPU at ≥80% sparse)*: CPU matches or exceeds
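As a rough illustration of how the speedup and energy figures above relate, the sketch below turns power samples taken at a fixed 50 ms interval into an energy total and computes the percentage reduction between two runs. The function names and the sample values are hypothetical, chosen only for illustration; this is not rolv's measurement code.

```python
POLL_INTERVAL_S = 0.050  # 50 ms polling interval, as stated in the release


def energy_joules(power_samples_w, interval_s=POLL_INTERVAL_S):
    """Approximate energy (J) as the sum of power samples (W) times the interval (s)."""
    return sum(power_samples_w) * interval_s


def energy_reduction_pct(baseline_j, optimized_j):
    """Percentage of baseline energy saved by the optimized run."""
    return 100.0 * (1.0 - optimized_j / baseline_j)


def reduction_at_equal_power(speedup):
    """Energy reduction implied by a speedup IF average power is unchanged (an assumption)."""
    return 100.0 * (1.0 - 1.0 / speedup)


# Hypothetical readings: both runs draw a constant 300 W,
# the baseline for 126 samples and the optimized run for 2 samples (63x shorter).
baseline = energy_joules([300.0] * 126)
optimized = energy_joules([300.0] * 2)

print(round(energy_reduction_pct(baseline, optimized), 2))
print(round(reduction_at_equal_power(63), 2))
```

Under the equal-power assumption, energy reduction follows directly from speedup as 1 − 1/speedup. Measured reductions can be higher or lower than that when average power draw differs between the two runs.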

*A used server CPU (typically available for $500–$900 on the secondary market) running rolvsparse© matches or exceeds a $30,000 high-end GPU at qualifying sparsity levels. All energy figures are live hardware readings, not estimates.

How rolvsparse© Works

Even vendor-optimized dense and sparse libraries process zeros: the “zero-FLOP bottleneck.” rolvsparse© restructures arithmetic at the primitive level, skipping meaningless operations while guaranteeing exact outputs (SHA-256 verified, e.g., deterministic hash: 8dbe5f139fd946d4cd84e8cc612cd9f68cbc87e394457884acc0c5dad56dd8dd). It even achieved 63× at 0% sparsity versus the vendor dense operator on a high-end GPU. Deploy as a drop-in software layer: one library for all hardware, with deterministic, reproducible results. Using the open verifier script (download at rolv.ai), users generate baselines; rolv returns personalized comparison reports with real power readings.

Market Impact

AI data centers could hit 9% of U.S. electricity by 2030; hyperscalers have $700B+ in capex commitments. rolvsparse© slashes that: 98–99.5% less energy (real readings), higher throughput from existing infrastructure, and edge viability (mobile processors: 70× sparse, on-device inference). CPU economics flip the script, enhancing hardware independence.

Applications Beyond AI

· LLMs/MoEs/Agents: Real-time TTFT for reasoning (177× on Llama 4)
· Edge/Mobile: Battery savings on ARM-based devices
· Engineering/Sim: 163× on FE solvers
· RecSys/Finance: 3.1× on Netflix-style sparse
· Sustainability: Cut Scope 2 emissions by 99%
· Sovereign AI: Maximize national hardware output

Validation & IP

University of Miami Frost Institute confirmed reproducibility via SHA-256 on every model above. Five pending patents cover the core innovations. Full JSON reports and methodology (including power details) are in “ROLV Validation Test.pdf” at rolv.ai.

Availability

rolvsparse© is available today. Download the verifier at https://rolv.ai/ROLV%20Validation%20Test.pdf, run it on your hardware, and email the JSON to rolv@rolv.ai for your free personalized comparison report. See the full benchmark barrage on Substack (rolv.substack.com) or rolv.ai/benchmarks.pdf. Email rolv@rolv.ai to schedule a live demonstration.

About rolv, LLC

Fort Lauderdale-based rolv, LLC develops rolvsparse©, software that restructures matrix math for 20–177× speedups and up to 99.5% energy savings on any hardware. The company was founded by Rolv E. Heggenhougen, whose breakthrough idea for speeding up matrix arithmetic arrived on a bike ride in May 2025 and has since been developed into a fully validated, hardware-agnostic compute primitive. Cryptographically verified, university validated.
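The verification idea described under “How rolvsparse© Works” (skip work on zeros, then confirm via a SHA-256 hash that the output is unchanged) can be illustrated with a small sketch. This is not rolvsparse© itself, whose method is not disclosed in this release. It is a generic zero-skipping matrix-vector product in pure Python, and the helper names (`dense_matvec`, `skip_zero_matvec`, `output_hash`) and the sample data are hypothetical.

```python
import hashlib
import struct


def dense_matvec(matrix, vec):
    """Reference product: multiplies every entry, including zeros."""
    out = []
    for row in matrix:
        acc = 0.0
        for w, x in zip(row, vec):
            acc += w * x
        out.append(acc)
    return out


def skip_zero_matvec(matrix, vec):
    """Same product, but only nonzero weights are visited (same left-to-right order)."""
    nonzeros = [[(j, w) for j, w in enumerate(row) if w != 0.0] for row in matrix]
    out = []
    for entries in nonzeros:
        acc = 0.0
        for j, w in entries:
            acc += w * vec[j]
        out.append(acc)
    return out


def output_hash(values):
    """SHA-256 over the little-endian float64 bytes of the output vector."""
    payload = struct.pack(f"<{len(values)}d", *values)
    return hashlib.sha256(payload).hexdigest()


# Hypothetical sample data, mostly zeros.
matrix = [
    [0.0, 2.0, 0.0, 0.0],
    [1.5, 0.0, 0.0, -3.0],
    [0.0, 0.0, 0.0, 0.0],
]
vec = [4.0, 0.5, 7.0, 2.0]

dense_out = dense_matvec(matrix, vec)
sparse_out = skip_zero_matvec(matrix, vec)

# For finite inputs like these, the skipped terms are zero products that do not
# change the running sum, so the two outputs should hash identically.
print(output_hash(dense_out) == output_hash(sparse_out))
```

Hashing the raw output bytes, rather than comparing within a tolerance, is what makes a "bit-identical" claim checkable: any single-bit difference in any output value changes the digest.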

University of Miami Validation

Media Contact:

Rolv E. Heggenhougen, CEO

rolv@rolv.ai | 954.253.4443 | rolv.ai