San Francisco, CA, March 27, 2026 (GLOBE NEWSWIRE) — 0G Labs today published a technical framework for verifying decentralized AI training, addressing the growing trust gap as distributed models scale toward frontier performance. The framework combines Trusted Execution Environments (TEEs) with economic incentive alignment to provide cryptographic proof that every training step executed correctly.

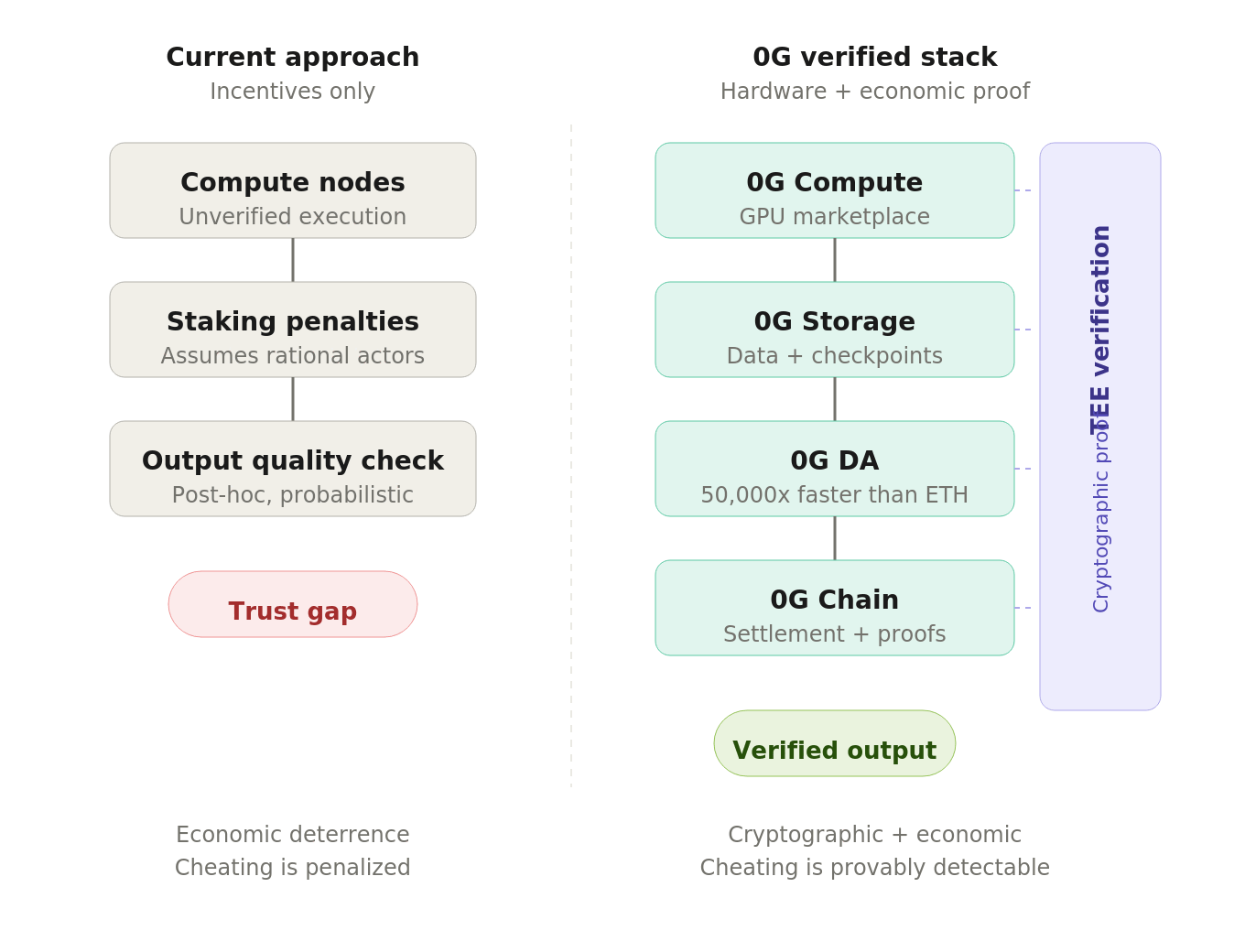

0G Labs’ four-layer verified infrastructure adds hardware-level cryptographic proof to decentralized AI training, moving beyond economic incentives alone.

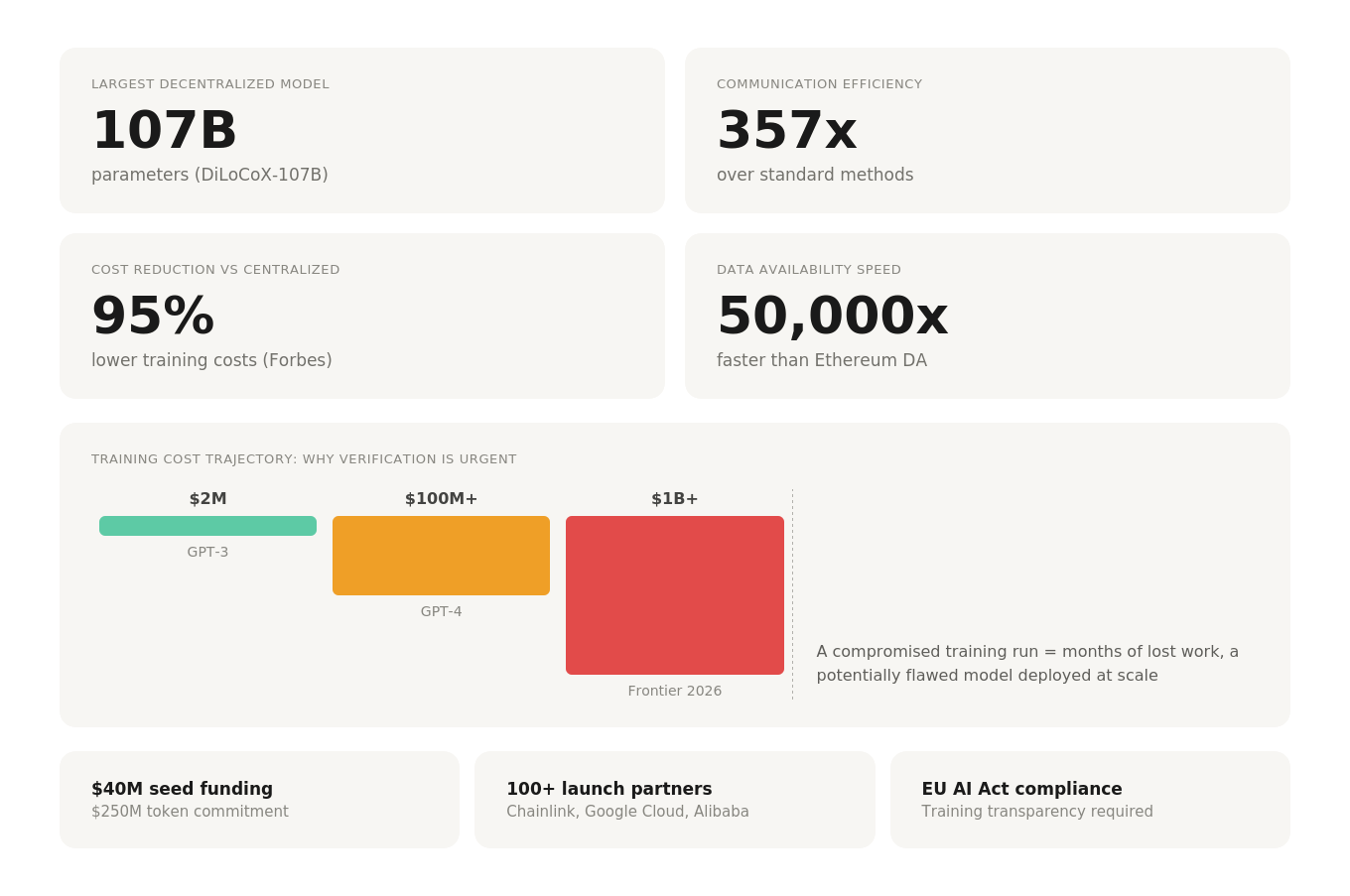

The publication follows 0G’s recent demonstration of DiLoCoX-107B, the world’s largest decentralized AI model at 107 billion parameters, which achieved a 357x gain in communication efficiency over standard methods on ordinary 1 Gbps internet connections. As decentralized training scales, the question of how to verify that distributed nodes performed honest work becomes a security concern, not just a technical one.

“Training a model is one problem. Trusting how it was trained is a different problem entirely,” said Ming Wu, CTO of 0G Labs. “When training runs cost hundreds of millions of dollars and the resulting models handle financial transactions and medical decisions, ‘the incentives should keep people honest’ is not sufficient. The hardware needs to prove it.”

The Verification Gap

Current approaches to decentralized AI training rely primarily on economic incentive alignment. Bittensor’s Covenant-72B, trained by 70+ independent contributors, uses staking penalties to discourage cheating. Nodes that produce poor gradients lose tokens. This approach has demonstrated real results but assumes rational actors and measurable output quality.
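The incentive model described above can be illustrated with a minimal sketch. It is not Bittensor's actual mechanism: the `Node` type, the `settle_round` function, the quality score, the threshold, and the slash rate are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    stake: float  # tokens the node has locked up as collateral


def settle_round(node: Node, quality: float,
                 threshold: float = 0.5, slash_rate: float = 0.1) -> float:
    """Slash a fraction of the node's stake if its gradient quality score
    falls below the threshold. Returns the amount slashed.

    All parameters are illustrative assumptions, not values from any
    real network.
    """
    if quality >= threshold:
        return 0.0
    penalty = node.stake * slash_rate
    node.stake -= penalty
    return penalty
```

The sketch also makes the stated assumption visible: the penalty only works if `quality` can actually be measured, which is the gap hardware verification is meant to close.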

0G’s framework adds a hardware verification layer. Every compute operation runs inside a TEE, a hardware-isolated region of the processor whose memory is encrypted and shielded from the host operating system and the node operator. The TEE generates cryptographic attestations proving that specific code ran on specific data and produced a specific result. These attestations are verifiable by any third party without trusting the node operator.
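The attestation idea can be sketched in a few lines of Python. This is a simplified simulation, not 0G's implementation or any vendor's attestation format: the `attest` and `verify` functions and the `HARDWARE_KEY` constant are hypothetical, and an HMAC stands in for the asymmetric, hardware-rooted signature a real TEE would produce (with a real TEE, verifiers check a public certificate chain and never hold a secret key).

```python
import hashlib
import hmac
import json

# Hypothetical stand-in for the hardware signing key. Real TEEs sign with an
# asymmetric key rooted in the chip, so third parties verify with public data.
HARDWARE_KEY = b"demo-hardware-key"


def digest(data: bytes) -> str:
    """SHA-256 fingerprint of code, inputs, or outputs."""
    return hashlib.sha256(data).hexdigest()


def attest(code: bytes, inputs: bytes, output: bytes) -> dict:
    """Simulate an enclave signing a report that binds code, data, and result."""
    report = {
        "code_hash": digest(code),
        "input_hash": digest(inputs),
        "output_hash": digest(output),
    }
    payload = json.dumps(report, sort_keys=True).encode()
    signature = hmac.new(HARDWARE_KEY, payload, hashlib.sha256).hexdigest()
    return {"report": report, "signature": signature}


def verify(attestation: dict, expected_code: bytes,
           inputs: bytes, output: bytes) -> bool:
    """Check the signature, then check that the report matches what we expect."""
    report = attestation["report"]
    payload = json.dumps(report, sort_keys=True).encode()
    expected_sig = hmac.new(HARDWARE_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected_sig, attestation["signature"]):
        return False  # report was altered or not signed by the hardware key
    return (
        report["code_hash"] == digest(expected_code)
        and report["input_hash"] == digest(inputs)
        and report["output_hash"] == digest(output)
    )
```

A verifier that knows which training code was approved can call `verify` on each step's attestation; a report for different code, different data, or a tampered result fails the check.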

The two approaches are complementary, not competing. Economic incentives handle participant coordination and marketplace dynamics. TEE verification handles computation integrity. 0G’s architecture supports both layers, giving builders the flexibility to match verification levels to their risk tolerance.

Why Now

Three factors make verification urgent rather than theoretical:

- Training costs have crossed $100 million and are heading toward $1 billion. A compromised training run at this scale represents months of lost work and a potentially flawed model.

- AI agents are already executing financial transactions and making medical recommendations, which makes training integrity directly relevant to output safety.

- The EU AI Act now requires transparency about how high-risk AI systems are developed, turning verification from a technical question into a compliance requirement.

“As AI models become more powerful and widely deployed, the integrity of their training process becomes a security question, not just an engineering one,” said Jake Salerno, VP of GTM at 0G Labs, who will present the framework at EthCC Cannes on April 1 in a keynote titled “Why Verification Should Be a First-Class Citizen in AI.”

0G’s Full-Stack Approach

Unlike projects that address only one component of the AI pipeline, 0G provides the complete infrastructure for verified decentralized AI:

- 0G Compute provides the decentralized GPU marketplace where training and inference jobs run with TEE verification

- 0G Storage handles training data, model checkpoints, and gradient logs with high-throughput decentralized storage

- 0G DA ensures data availability for training coordination, 50,000x faster and 100x cheaper than Ethereum’s DA layer

- 0G Chain settles training contributions, staking, and verification proofs onchain

The DiLoCoX-107B retrain, announced earlier this week, is the first large-scale application of this verified training infrastructure. The retrain uses TEE-backed verification throughout, with all checkpoints documented and publicly auditable. According to Forbes, 0G’s approach achieves up to 95% cost reduction compared to centralized alternatives.

The verification framework is detailed in a technical blog published today at 0g.ai/blog/why-verification-matters-decentralized-ai-training.

About 0G Labs

0G Labs is the creator of the Blockchain for AI Agents and one of the best-funded AI infrastructure projects in Web3, with $40 million in seed funding and a $250 million token commitment from investors including Hack VC, Delphi Digital, OKX Ventures, Samsung Next, and Bankless Ventures. 0G’s Aristotle Mainnet, launched in September 2025, powers a full-stack AI infrastructure. This includes an EVM-compatible L1 chain, decentralized compute, distributed storage with throughput of up to 2 GB per second, and a data availability layer that is 50,000 times faster and 100 times cheaper than Ethereum DA.

The company has 100+ launch partners including Chainlink, Google Cloud, and Alibaba Cloud. The $0G token is listed on Binance, OKX, Bybit, and Gate.io.

About 0G Foundation

The 0G Foundation drives innovation and growth within the 0G ecosystem, maintaining the Blockchain for AI Agents infrastructure fueled by $0G. The Foundation supports ecosystem development, funds grants, and enables community governance to advance the mission of making AI a public good.

0G Labs’ decentralized AI infrastructure by the numbers: 107B parameters trained, 357x communication efficiency, and 50,000x faster data availability than Ethereum.

Press Inquiries